Tensors are the backbone of modern deep learning: they are the data structures that hold a neural network's inputs, weights, and outputs. Understanding tensor calculus is crucial for anyone who wants to look deeper into the mechanics of these systems. This article covers how tensors operate, where they came from, and the role they play in neural network architectures.

From basic operations to advanced concepts, tensor calculus supplies the mathematical foundation for implementing deep learning algorithms and underpins many real-world applications. Here, we explore how it connects abstract mathematical theory with practical computing.

Introduction to Tensor Calculus

Tensor calculus is a branch of mathematics that extends vector calculus to tensors, objects that generalize scalars, vectors, and matrices to an arbitrary number of indices. In deep learning frameworks, a tensor is represented as a multi-dimensional array, which makes it a natural container for complex data. Tensors are the common format for both data representation and the operations performed inside machine learning models.

The history of tensor calculus can be traced back to the work of mathematicians like Gregorio Ricci-Curbastro and Tullio Levi-Civita in the late 19th century, which laid the foundation for modern applications in physics and engineering.

The Importance of Tensor Calculus in Deep Learning Frameworks

Tensor calculus plays a crucial role in deep learning by enabling effective data representation and transformation. The ability to understand and manipulate tensors allows researchers and practitioners to design and implement sophisticated neural networks that can learn from vast amounts of data. Historical development illustrates that as computational capabilities improved, the applications of tensor calculus expanded, demonstrating its versatility across various domains.

Historical Development of Tensor Calculus in Mathematics

The evolution of tensor calculus is marked by significant milestones and contributions that have shaped its current understanding. Early formulations by Ricci-Curbastro and Levi-Civita provided the necessary tools to work with tensors, which were later adopted in the theory of relativity by Einstein. Over the decades, the formalism of tensor calculus has been refined, leading to its integration into various scientific fields, including deep learning.

The Role of Tensors in Deep Learning

Tensors serve as fundamental data structures in deep learning, enabling the representation of complex datasets in a format suitable for computational processing. In practice, deep learning models utilize different types of tensors to handle varying dimensions of data.

Utilization of Tensors as Data Structures

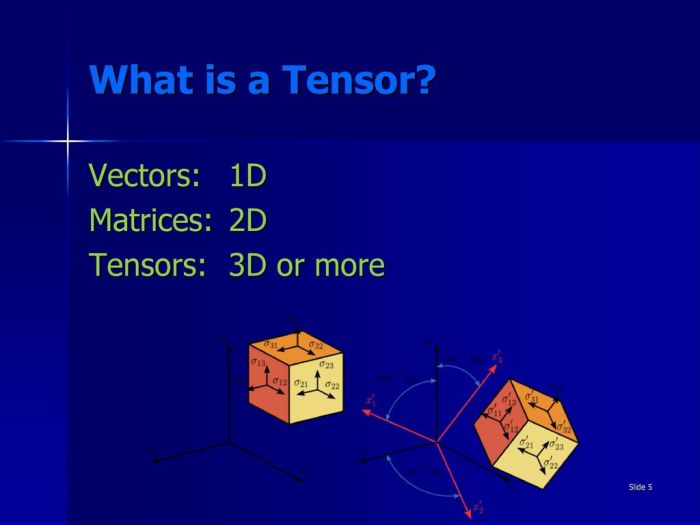

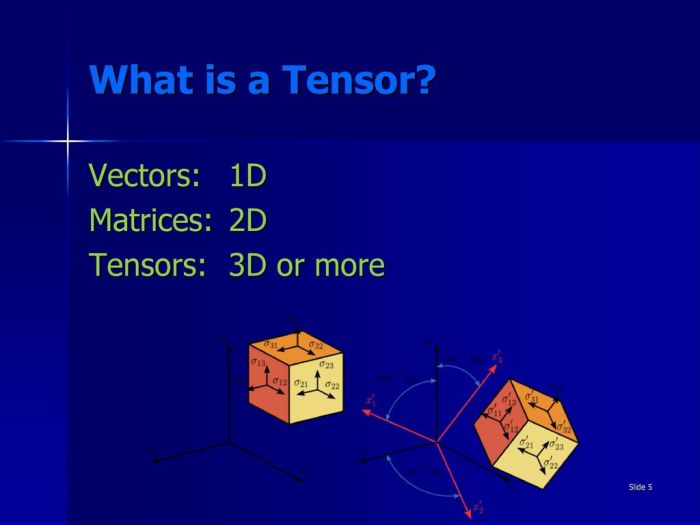

Tensors can be categorized based on their dimensions:

- Scalars: Zero-dimensional tensors, representing single values.

- Vectors: One-dimensional tensors, used to represent ordered lists of numbers.

- Matrices: Two-dimensional tensors, commonly used in data transformations and linear algebra.

- Higher-Dimensional Tensors: Multi-dimensional tensors, essential for representing complex data like images, videos, and multi-channel datasets.
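The categories above can be sketched in code. This is a minimal illustration that assumes NumPy arrays as a stand-in for framework tensors; the variable names and the image batch shape are hypothetical and chosen only for the example.

```python
import numpy as np

# NumPy arrays stand in for framework tensors (an assumption for illustration).
scalar = np.array(3.5)                       # zero dimensions, shape ()
vector = np.array([1.0, 2.0, 3.0])           # one dimension, shape (3,)
matrix = np.array([[1.0, 2.0], [3.0, 4.0]])  # two dimensions, shape (2, 2)

# Hypothetical batch of 8 RGB images of 32x32 pixels,
# laid out as (batch, height, width, channels).
images = np.zeros((8, 32, 32, 3))

for name, t in [("scalar", scalar), ("vector", vector),
                ("matrix", matrix), ("images", images)]:
    print(f"{name}: ndim={t.ndim}, shape={t.shape}")
```

The `ndim` attribute reports the number of dimensions, and `shape` reports the size along each one.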

Operations on Tensors During Model Training

During model training, various operations are performed on tensors to optimize neural networks. These operations include:

- Element-wise operations: Such as addition and multiplication, which are crucial for calculations in neural networks.

- Matrix multiplications: Fundamental for layer transformations in neural networks.

- Tensor reshaping: To adjust the dimensions of tensors as needed for various computations.
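The three kinds of operation listed above might look as follows in practice. This is a small sketch that assumes NumPy; the dense-layer weights and bias are made-up values for illustration, not taken from any particular model.

```python
import numpy as np

a = np.array([[1.0, 2.0], [3.0, 4.0]])
b = np.array([[10.0, 20.0], [30.0, 40.0]])

# Element-wise operations act on matching positions.
elementwise_sum = a + b        # [[11, 22], [33, 44]]
elementwise_product = a * b    # [[10, 40], [90, 160]]

# Matrix multiplication: a dense layer computes x @ weights + bias.
x = np.array([[1.0, 2.0, 3.0]])     # one sample with 3 features, shape (1, 3)
weights = np.ones((3, 2))           # hypothetical weights, shape (3, 2)
bias = np.array([0.5, -0.5])        # hypothetical bias, shape (2,)
layer_output = x @ weights + bias   # shape (1, 2)

# Reshaping changes the layout without changing the values.
flat = a.reshape(-1)                # shape (4,)
```

Note that `*` is element-wise in NumPy, while `@` performs matrix multiplication; confusing the two is a common source of shape errors.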

Mathematical Foundations of Tensor Calculus

Understanding the mathematical principles behind tensor calculus is essential for effectively using it in deep learning. Key concepts include tensor operations, which form the basis for manipulating multi-dimensional arrays.

Key Mathematical Principles Underpinning Tensor Calculus

The fundamental operations of tensor calculus include addition, multiplication, and contraction. These operations allow for the combination and transformation of tensors to extract meaningful information.

Illustration of Tensor Operations

Here are some of the basic tensor operations:

| Operation | Description | Example |
|---|---|---|
| Addition | Combining tensors of the same shape, element by element | A + B = C |
| Multiplication | Element-wise multiplication of tensors of the same shape | A * B = C |
| Contraction | Summing over a repeated (paired) index of a tensor | Tr(A) = Sum_i(A_ii) |
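Contraction is the least familiar of these operations, so a short sketch may help. It assumes NumPy and uses `np.einsum`, where a repeated index letter marks the index being summed over; the matrices and the rank-3 tensor are arbitrary example values.

```python
import numpy as np

A = np.array([[1.0, 2.0], [3.0, 4.0]])
B = np.array([[0.0, 1.0], [1.0, 0.0]])

# Trace: contract the two indices of A with each other, sum_i A_ii.
trace = np.einsum("ii->", A)

# Matrix product: contract the shared index j of A_ij and B_jk.
product = np.einsum("ij,jk->ik", A, B)

# Contracting the last index of a rank-3 tensor with a vector
# leaves a rank-2 result.
T = np.arange(24, dtype=float).reshape(2, 3, 4)
v = np.ones(4)
contracted = np.einsum("ijk,k->ij", T, v)   # shape (2, 3)
```

Seen this way, the trace and the ordinary matrix product are both special cases of contraction.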

Applications of Tensor Calculus in Neural Networks

Tensor calculus enhances the performance and efficiency of neural networks through optimized mathematical modeling and operations.

Enhancing Neural Network Architectures

The application of tensor calculus allows for the development of more sophisticated neural network architectures that can handle complex data relationships. By leveraging tensor operations, networks can efficiently learn from high-dimensional data, improving accuracy and performance.

Application in Backpropagation Algorithms

Backpropagation, the cornerstone of training neural networks, relies heavily on tensor calculus. It applies the chain rule layer by layer to compute the gradient of the loss with respect to each weight tensor, and these gradients are then used to update the weights so that the loss decreases.
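As a rough illustration of the gradient computation, the sketch below works through one gradient-descent step for a single linear layer with a mean-squared-error loss. It assumes NumPy and random example data; the names `loss_fn` and `grad_fn` and the learning rate are hypothetical, and real frameworks derive the gradient automatically rather than by hand.

```python
import numpy as np

rng = np.random.default_rng(0)
x = rng.normal(size=(4, 3))   # 4 samples, 3 features
y = rng.normal(size=(4, 2))   # 4 targets, 2 outputs
W = rng.normal(size=(3, 2))   # weights of one linear layer

def loss_fn(W):
    """Mean squared error of the linear model x @ W."""
    pred = x @ W
    return np.mean((pred - y) ** 2)

def grad_fn(W):
    """Gradient of loss_fn with respect to W, via the chain rule."""
    pred = x @ W
    grad_pred = 2.0 * (pred - y) / pred.size   # dL/dpred
    return x.T @ grad_pred                     # dL/dW = x^T (dL/dpred)

learning_rate = 0.1                            # hypothetical step size
loss_before = loss_fn(W)
W_updated = W - learning_rate * grad_fn(W)     # one gradient-descent step
loss_after = loss_fn(W_updated)
print(loss_before, loss_after)
```

The gradient has the same shape as `W`, which is what allows the update to be written as a single tensor subtraction.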

Case Studies of Successful Implementations

Several real-world applications demonstrate the successful use of tensor calculus in deep learning. For instance, in image recognition tasks, convolutional neural networks (CNNs) utilize tensor operations to efficiently process and classify images, resulting in state-of-the-art performance in various benchmarks.
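To make the CNN example more concrete, here is a minimal sketch of the sliding-window operation at the heart of a convolutional layer. It assumes NumPy, a single-channel image, and no padding or stride; `conv2d_valid` is a hypothetical helper written with explicit loops for clarity, whereas production frameworks use heavily optimized kernels.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and
    sum the element-wise products at each position."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            patch = image[i:i + kh, j:j + kw]
            out[i, j] = np.sum(patch * kernel)
    return out

image = np.arange(16, dtype=float).reshape(4, 4)   # toy 4x4 "image"
kernel = np.array([[1.0, 0.0], [0.0, -1.0]])       # toy diagonal-difference filter
feature_map = conv2d_valid(image, kernel)          # shape (3, 3)
```

Each output value combines an element-wise product with a sum, so the layer is built entirely from the basic tensor operations described earlier.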

Relationship Between Tensor Calculus and Exact Sciences

Tensor calculus is integral to the framework of exact sciences, providing precise mathematical tools to model complex systems.

Implications of Precise Mathematical Modeling

The ability to model complex systems with high accuracy has significant implications for the development of advanced deep learning solutions. Accurate models lead to more reliable predictions and insights across various fields.

Distinctions Between Exact and Applied Sciences

To clarify the relationship between tensor calculus and sciences, the following table highlights key distinctions:

| Aspect | Exact Sciences | Applied Sciences |
|---|---|---|
| Focus | Theoretical understanding | Practical applications |
| Methodology | Mathematical modeling | Experimentation and implementation |
| Tensor Calculus Role | Framework for models | Tool for solutions |

Advanced Topics in Tensor Calculus for Deep Learning

Delving into advanced tensor operations offers deeper insights into optimizing deep learning models.

Insights into Advanced Tensor Operations

Advanced operations such as tensor decomposition and factorization allow practitioners to simplify complex tensor computations, enhancing efficiency in model training and inference.
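The simplest instance of this idea is low-rank factorization of a matrix, the two-dimensional case; decompositions of higher-order tensors follow the same principle but are not shown here. The sketch below assumes NumPy and a hypothetical weight matrix constructed to have rank 2, so the truncated SVD reproduces it almost exactly.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical 64x32 weight matrix built to have rank 2.
W = rng.normal(size=(64, 2)) @ rng.normal(size=(2, 32))

# Truncated SVD: keep only the largest `rank` singular values.
U, s, Vt = np.linalg.svd(W, full_matrices=False)
rank = 2
left = U[:, :rank] * s[:rank]    # shape (64, 2)
right = Vt[:rank, :]             # shape (2, 32)
W_approx = left @ right

params_full = W.size                        # values stored for W
params_factored = left.size + right.size    # values stored for the two factors
print(params_full, params_factored)
```

Storing the two factors instead of the full matrix reduces the parameter count whenever the chosen rank is small relative to the matrix dimensions. For real weight matrices the approximation is generally lossy, so the rank is a trade-off between size and accuracy.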

Challenges and Limitations

Despite its strengths, tensor calculus poses challenges, particularly regarding computational complexity and memory requirements when dealing with large-scale data. Addressing these limitations is crucial for future deep learning advancements.
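A quick back-of-the-envelope calculation shows how fast memory requirements grow. The sketch assumes NumPy, 32-bit floats, and a hypothetical batch of 64 RGB images at 224x224 resolution; it counts only the input tensor, not activations, weights, or gradients.

```python
import numpy as np

# Hypothetical input batch: (batch, height, width, channels).
batch, height, width, channels = 64, 224, 224, 3

bytes_per_value = np.dtype(np.float32).itemsize   # 4 bytes per float32
n_values = batch * height * width * channels
size_mib = n_values * bytes_per_value / 2**20

print(f"{n_values} values, {size_mib:.2f} MiB")
```

Intermediate activations in every layer add to this figure, and training also has to store the gradients, which is why batch size and numeric precision are common levers for fitting a model into memory.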

Resources for Further Learning

For those interested in exploring advanced tensor calculus concepts, useful kinds of resources include:

- Books on Tensor Calculus and Machine Learning

- Online courses covering TensorFlow and PyTorch

- Research papers on cutting-edge applications of tensor calculus in AI

Future Trends in Tensor Calculus and Deep Learning

The future of tensor calculus in deep learning is poised for exciting developments driven by technological advancements.

Predictions for Future Developments

Innovations in hardware and algorithms are expected to enhance the capabilities of tensor calculus, leading to more efficient deep learning models. For example, quantum computing may revolutionize tensor operations, enabling computations that were previously considered infeasible.

Impact of Emerging Technologies

As technologies such as edge computing and neuromorphic computing evolve, they will significantly influence how tensor calculus is applied in real-world scenarios. This could lead to more efficient and scalable deep learning applications.

Innovative Research Directions

Research combining tensor calculus with emerging fields such as explainable AI and generative models holds great promise. Exploring these intersections will likely yield new insights and methodologies that push the boundaries of what deep learning can achieve.

Concluding Remarks

In summary, grasping tensor calculus opens up a world of possibilities in understanding and designing deep learning models. As we venture into advanced topics and future trends, the importance of tensor calculus in this field becomes increasingly apparent. It is not just a mathematical tool; it shapes the future of artificial intelligence and machine learning.

Popular Questions

What is a tensor?

A tensor is a mathematical object that generalizes scalars, vectors, and matrices, allowing for complex data representation in multiple dimensions.

Why is tensor calculus important for deep learning?

Tensor calculus provides the mathematical framework needed to perform operations on multi-dimensional arrays, which are essential for training deep learning models.

How do tensors differ from regular arrays?

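In mathematics, a tensor is defined by how its components transform under a change of basis, which a plain array does not encode. In deep learning frameworks, tensors are multi-dimensional arrays that additionally support features such as automatic differentiation and execution on accelerators like GPUs, making them better suited to the computations involved in training.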

What are some common operations performed on tensors?

Common operations include addition, multiplication, contraction, and reshaping, all of which are vital for the manipulation of data in neural networks.

How can I learn more about tensor calculus?

There are many online courses, textbooks, and resources available that focus on tensor calculus, particularly in the context of machine learning and deep learning.